The EU AI Act high-risk deadline is 2 August 2026 — and the proposed delay just failed. Here's what operations and IT leaders need to do before enforcement starts.

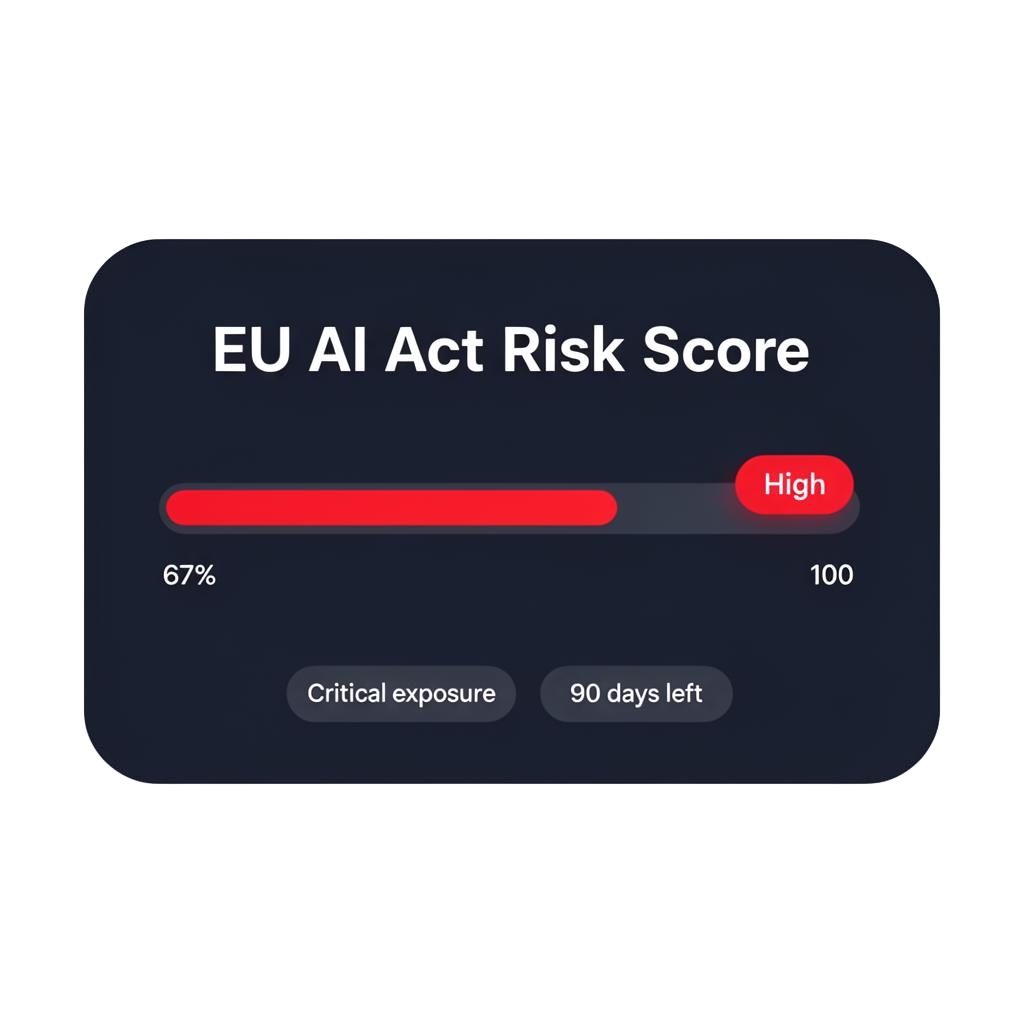

The EU AI Act's high-risk AI obligations become enforceable on 2 August 2026, 90 days from now. Trilogue negotiations on a proposed delay ended without agreement on 28 April 2026, so the original deadline stands. If you are deploying AI agents, RPA, or automated decision-making in your operations, this affects you directly. This regulation is not aimed only at AI developers. It also covers the organisations deploying AI, including yours.

The Delay Is Off the Table — For Now

In November 2025, the European Commission proposed pushing the high-risk compliance deadline back to December 2027, as part of the Digital Omnibus package. Many organisations quietly stood down their compliance preparations, assuming the extension would pass.

On 28 April 2026 — six days ago — the second political trilogue between the European Parliament, the Council, and the European Commission ended without agreement. A further trilogue is scheduled for 13 May 2026, but if the Omnibus is not formally adopted before 2 August 2026, the original AI Act provisions and their current timeline apply from that date as written.

According to DLA Piper's legal analysis published 29 April 2026, no delay has been adopted. Modulos AI likewise advises compliance teams to re-anchor against the original 2 August 2026 deadline.

What the AI Act Actually Requires From Deployers

Most coverage of the AI Act focuses on AI developers and model providers. But the obligations that take effect on 2 August 2026 also apply to deployers: organisations that put AI systems to work in their operations.

For most enterprises, the most critical compliance deadline is 2 August 2026, when requirements for Annex III high-risk AI systems become enforceable, including AI used in employment, credit decisions, education, and customer-facing contexts. According to Secure Privacy's enterprise compliance guide, non-compliance could cost your company up to 7% of global annual revenue. That ceiling applies to the most serious violations; breaches of high-risk system obligations are capped at 3% of global annual turnover.

Examples of high-risk deployments that are common inside enterprise automation programmes:

- Automated CV screening or candidate ranking tools

- AI used in performance monitoring or workforce allocation

- Automated credit or invoice risk scoring

- AI agents making decisions that affect individuals without human review

- RPA workflows that feed into regulated processes

Why Automation Programmes Are Particularly Exposed

Most organisations running automation have built their programmes incrementally — a bot here, an agent there, a workflow connecting several systems. Very few have a complete, current inventory of what is actually running and what decisions it is making.

Over half of organisations lack systematic inventories of AI systems currently in production or development. Without knowing what AI exists within the enterprise, risk classification and compliance planning are impossible.

The first step toward compliance is to understand your full automation estate — every bot, agent, and automated decision running across the business.

Is Your AI Actually High-Risk? How to Find Out Quickly

The AI Act uses a four-tier risk classification. The tier that matters most for enterprises right now is high-risk — systems listed under Annex III of the regulation.

Run each of your automated systems through this short checklist:

1. Does it affect people?

If an automated system influences hiring, pay, performance, access to services, or credit — it is likely high-risk.

2. Does it make or feed decisions without consistent human review?

Automation that produces outputs a human routinely signs off on without checking is functionally making the decision itself.

3. Is it running in a regulated sector?

Finance, HR, healthcare, and public services face the highest scrutiny under Annex III.

4. Is it documented?

Technical documentation requirements demand comprehensive records of design decisions, data lineage, and testing methodologies. Organisations practising agile development with minimal documentation will struggle to create these retrospectively.
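The four questions above can be sketched as a simple screening function. This is a rough triage aid under our own assumptions, not a legal classification: the `ScreeningAnswers` fields, the `triage` function, and the labels it returns are hypothetical names chosen for illustration, and the ordering of the checks is one reasonable reading of the checklist.

```python
from dataclasses import dataclass


@dataclass
class ScreeningAnswers:
    """Answers to the four checklist questions for one automated system."""
    affects_people: bool           # Q1: influences hiring, pay, performance, access, credit
    lacks_consistent_review: bool  # Q2: makes or feeds decisions without consistent human review
    regulated_sector: bool         # Q3: finance, HR, healthcare, public services
    documented: bool               # Q4: technical documentation exists


def triage(answers: ScreeningAnswers) -> str:
    """Return a rough priority label (hypothetical labels, not legal categories)."""
    if answers.affects_people:
        # Q1 is treated as the strongest signal, as in the checklist.
        label = "likely high-risk"
    elif answers.lacks_consistent_review or answers.regulated_sector:
        label = "needs review"
    else:
        label = "lower priority"
    if not answers.documented:
        # A documentation gap is flagged whatever the risk label is.
        label += "; documentation gap"
    return label


# Example: an undocumented CV screening tool with rubber-stamp review.
cv_screener = ScreeningAnswers(
    affects_people=True,
    lacks_consistent_review=True,
    regulated_sector=True,
    documented=False,
)
print(triage(cv_screener))
```

Anything labelled "likely high-risk" or "needs review" still has to be checked against the Annex III categories themselves.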

What Does Good Compliance Look Like?

The AI Act is not primarily a technical regulation. It is a governance regulation. The work it demands is organisational.

Before 2 August 2026, organisations should classify all AI systems, assess whether they fall under high-risk or prohibited categories, and implement the relevant measures for risk management, human oversight, data governance, and transparency. By that date, conformity assessments, technical documentation, and EU database registration for high-risk systems should all be complete.

Four steps that get you there:

Step 1: Build your AI inventory

Map every system that uses AI or automation in a way that affects decisions — including third-party tools and embedded AI in SaaS products. This is the foundation. Nothing else is possible without it.
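As a minimal sketch of what one inventory entry might hold, the record below captures the points this step names: the system, whether it is third-party or embedded in a SaaS product, and which decisions it touches. The `AutomationRecord` fields, the `kind` values, and the sample entries are all assumptions for illustration; a real inventory will need more fields.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AutomationRecord:
    """One entry in a hypothetical AI and automation inventory."""
    name: str
    kind: str                   # e.g. "rpa_bot", "ai_agent", "saas_embedded_ai"
    owner: str                  # accountable team or person
    vendor: str = "in-house"    # third-party supplier, if any
    decisions_affected: List[str] = field(default_factory=list)


def systems_affecting_decisions(inventory: List[AutomationRecord]) -> List[AutomationRecord]:
    """Return the entries that influence at least one decision."""
    return [record for record in inventory if record.decisions_affected]


# Made-up example entries.
inventory = [
    AutomationRecord(
        name="cv-screener",
        kind="saas_embedded_ai",
        owner="HR Ops",
        vendor="ExampleVendor",
        decisions_affected=["candidate shortlisting"],
    ),
    AutomationRecord(name="invoice-mover", kind="rpa_bot", owner="Finance Ops"),
]

for record in systems_affecting_decisions(inventory):
    print(record.name, record.decisions_affected)
```

Entries that affect decisions are the ones to carry forward into risk classification.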

Step 2: Classify by risk

Work through Annex III categories for each system. For anything that looks like it might be high-risk, treat it as high-risk until you have evidence otherwise.

Step 3: Establish human oversight for high-risk systems

The AI Act requires that humans can understand, monitor, override, and stop AI systems that fall under its scope. If your current workflows do not have documented handoff points, you need to design them in.
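One way to picture a documented handoff point is a gate where the AI system only proposes and a named reviewer approves, overrides, or stops the outcome. The sketch below assumes that design; `Proposal`, `Outcome`, `apply_review`, and the three action names are hypothetical and are not terms taken from the Act.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Proposal:
    """What the AI system recommends, plus the rationale shown to the reviewer."""
    system: str
    subject_id: str
    recommendation: str
    rationale: str


@dataclass
class Outcome:
    """The decision after human review. final_decision is None if stopped."""
    final_decision: Optional[str]
    reviewer: str
    action: str


def apply_review(proposal: Proposal, reviewer: str, action: str,
                 replacement: Optional[str] = None) -> Outcome:
    """Apply a reviewer's action to a proposal: approve, override, or stop."""
    if action == "approve":
        return Outcome(proposal.recommendation, reviewer, action)
    if action == "override":
        if replacement is None:
            raise ValueError("override requires a replacement decision")
        return Outcome(replacement, reviewer, action)
    if action == "stop":
        # Nothing is decided; the case leaves the automated path.
        return Outcome(None, reviewer, action)
    raise ValueError(f"unknown action: {action}")


proposal = Proposal("cv-screener", "candidate-123", "reject", "score below threshold")
outcome = apply_review(proposal, reviewer="j.smith", action="override",
                       replacement="invite to interview")
print(outcome)
```

The point of the design is that no recommendation becomes a decision without a recorded reviewer and action.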

Step 4: Start logging

Every AI decision that touches a person or a regulated process needs an audit trail. This is not optional under the Act — and it is also simply good operational practice.
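A minimal sketch of an audit trail entry, assuming one JSON object per line in an append-only log. The field names (`system`, `subject_id`, `decision`, `reason`, `reviewer`) are our own choice for illustration; the Act does not prescribe this schema.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_entry(system: str, subject_id: str, decision: str, reason: str,
                reviewer: Optional[str] = None,
                now: Optional[datetime] = None) -> str:
    """Serialise one decision as a JSON line with a UTC timestamp."""
    timestamp = now or datetime.now(timezone.utc)
    return json.dumps(
        {
            "timestamp": timestamp.isoformat(),
            "system": system,
            "subject_id": subject_id,
            "decision": decision,
            "reason": reason,
            "reviewer": reviewer,  # None means no human reviewed this decision
        },
        sort_keys=True,
    )


def append_entry(stream, entry: str) -> None:
    """Append one entry to any writable text stream, such as a file opened with mode 'a'."""
    stream.write(entry + "\n")


entry = audit_entry(
    system="invoice-risk-scorer",
    subject_id="invoice-0042",
    decision="flag for manual check",
    reason="risk score above threshold",
    reviewer="a.jones",
)
print(entry)
```

Recording the reviewer alongside the decision also shows which outputs went out with no human check at all.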

The Connection to Orchestration

The AI Act's core demand is visibility and control. That is exactly what most automation programmes currently lack — and exactly what orchestration provides.

An orchestration layer that monitors all your agents and bots in one place, logs every decision, flags anomalies, and provides a clear record of what ran, when, and why is not just operationally valuable. Under the AI Act, it becomes the technical backbone of your compliance posture.

Organisations that have already invested in a unified control plane for their automation will find compliance significantly more manageable. Those running fragmented, siloed automation estates face a harder road.

The regulation is creating a forcing function for something that was already good practice: knowing what your automation is doing, being able to prove it, and being able to intervene when something goes wrong.

FAQ

Does the EU AI Act apply to my company if we are not an AI developer?

Yes. The Act places significant obligations on deployers — organisations that use AI systems in their operations — not just on the companies that build them. If you are using AI agents, automated decision tools, or RPA workflows that affect people or regulated processes, the Act applies to you.

What counts as a high-risk AI system under the Act?

High-risk systems are those listed in Annex III of the regulation. They include AI used in hiring and workforce management, credit scoring, access to essential services, and systems that affect individuals in significant ways. If an automated system influences decisions about people, it warrants careful classification.

What happens if we are not compliant by 2 August 2026?

Enforcement begins at the national level from that date. Penalties for non-compliance with high-risk system obligations can reach up to 3% of global annual turnover, and for certain violations up to 7%. National authorities will have active powers to investigate and sanction from August 2026.
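To make the percentages concrete, here is a rough sketch of the maximum fine calculation. It assumes the penalty structure in Article 99 of the Act as we understand it: a fixed euro amount or a percentage of worldwide annual turnover, whichever is higher (whichever is lower for SMEs). The fixed amounts (EUR 15 million and EUR 35 million) come from that reading and are not stated in this article, so check them against the current legal text. The function and tier names are hypothetical.

```python
# (fixed cap in EUR, percentage points of worldwide annual turnover)
# Assumed from Article 99; verify against the current text of the Act.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligation": (15_000_000, 3),
}


def max_fine_eur(turnover_eur: int, tier: str, sme: bool = False) -> float:
    """Return the maximum possible fine for a tier, not a predicted fine."""
    fixed_cap, pct_points = PENALTY_TIERS[tier]
    pct_amount = turnover_eur * pct_points / 100
    # Larger undertakings face the higher of the two amounts; SMEs the lower.
    return min(fixed_cap, pct_amount) if sme else max(fixed_cap, pct_amount)


# A company with EUR 2 billion turnover breaching a high-risk obligation:
print(max_fine_eur(2_000_000_000, "high_risk_obligation"))
```

These are ceilings only. Actual fines are set case by case by the national authorities.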

We were waiting for the Digital Omnibus delay to pass. What now?

The 28 April trilogue failed to reach agreement. A further session is scheduled for 13 May 2026, but there is no guarantee of resolution before the 2 August deadline. Treat the original deadline as live and restart your compliance preparations immediately. The underlying work (inventory, classification, governance) is the same regardless of which deadline ultimately applies.

Book a Free AI Readiness Conversation

30 minutes to get an honest picture of where you stand and what a realistic first step looks like.